ChatGPT Detector Arrives: 100% Recognition of AI-Written Papers


ChatGPT is a chatbot built on a large language model (LLM) and released by the OpenAI research lab on November 30, 2022. It holds conversations in natural language and responds based on the context of the exchange, which makes its replies read much like those of a human.

Since its launch, ChatGPT’s remarkable abilities have garnered significant attention.

Some published papers have shown that ChatGPT can generate fraudulent scientific papers that appear highly realistic, raising concerns about the integrity of scientific research and the credibility of published papers.

Renowned academic watchdog, Elisabeth Bik, expressed concerns that the rapid rise of ChatGPT and other generative AI tools will aid paper mills, exacerbating academic misconduct issues. She stated, “I am very concerned that we now have a substantial number of papers that we cannot distinguish.”

On November 6, 2023, researchers from the University of Kansas published a paper titled “Accurately detecting AI text when ChatGPT is told to write like a chemist” in the journal Cell Reports Physical Science.

This research developed a machine learning-based tool called the “ChatGPT Detector,” which distinguishes between human and AI authors based on writing style characteristics. According to the paper, it identifies AI-generated scientific text, including text from the newer ChatGPT-4, with high accuracy.

In June 2023, Heather Desaire and her team first described their ChatGPT Detector, which used off-the-shelf machine learning to examine 20 writing style features, including variation in sentence length and the frequency of specific words and punctuation marks, to determine whether an article was written by an academic scientist or by ChatGPT. The results showed that a small set of writing style features is enough to achieve very high accuracy.
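To make the idea of writing style features concrete, here is a minimal sketch in Python. It is not the study's feature set or code: the function name `style_features` and the six features it returns are hypothetical stand-ins for the kinds of measurements described above (sentence-length variation, punctuation frequency, frequency of a specific word).

```python
import re
import statistics


def style_features(text: str) -> dict[str, float]:
    """Compute a few illustrative writing-style features for one passage.

    These features are assumptions chosen for illustration; they are not
    the 20 features used in the published detector.
    """
    # Naive sentence split: break on whitespace that follows ., ! or ?
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]

    # Lowercased word tokens, used for word-frequency features.
    words = re.findall(r"[A-Za-z']+", text.lower())
    n_words = max(len(words), 1)  # avoid division by zero on empty input

    return {
        "n_sentences": float(len(sentences)),
        "mean_sentence_len": statistics.fmean(lengths) if lengths else 0.0,
        # Population standard deviation as a measure of length variation.
        "std_sentence_len": statistics.pstdev(lengths) if len(lengths) > 1 else 0.0,
        "semicolons_per_100_words": 100 * text.count(";") / n_words,
        "parens_per_100_words": 100 * text.count("(") / n_words,
        "freq_however": words.count("however") / n_words,
    }


sample = "Short one. This sentence is a bit longer; however, it ends (eventually)."
print(style_features(sample))
```

Feature vectors like this one could then be fed to any standard classifier. The classifier choice is left open here because this article does not name the one used in the study.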

In the newly published paper, the research team trained the ChatGPT Detector on introductions from papers in ten chemistry journals published by the American Chemical Society (ACS). Introductions from 100 published papers served as the human-written text. The researchers then asked ChatGPT-3.5 to write 200 introductions in the style of ACS journals: 100 based on paper titles and 100 based on paper abstracts.

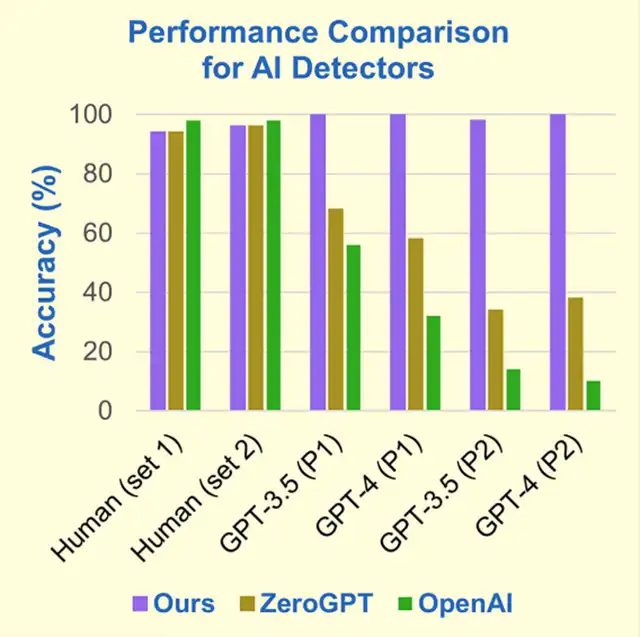

When comparing introductions written by humans and ChatGPT-3.5 from the same journal, if the introduction was based on the paper title, the ChatGPT Detector was able to identify which ones were authored by ChatGPT-3.5 with 100% accuracy. If the introduction was based on the paper abstract, the detection accuracy was slightly lower at 98%. Furthermore, the ChatGPT Detector’s performance in recognizing text written by ChatGPT-4, the latest version, was equally impressive.

In contrast, the AI detector ZeroGPT had an accuracy of only 35%-65% in recognizing introductions written by ChatGPT, depending on the version of ChatGPT used (ChatGPT-3.5 or ChatGPT-4) and whether the introduction was based on the paper title or abstract.

The text classifier tool from OpenAI, the developers of ChatGPT, performed the worst, with an accuracy of only approximately 10%-55% in recognizing introductions written by AI.
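The accuracy figures above are reported per condition (ChatGPT version, and title-based versus abstract-based prompt). A minimal sketch of that bookkeeping follows. The helper `accuracy_by_group` and the records are hypothetical placeholders for illustration, not data or code from the study.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Return the fraction of correct predictions for each group.

    records: iterable of (group, true_label, predicted_label) tuples.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        correct[group] += int(truth == predicted)
    return {group: correct[group] / total[group] for group in total}


# Placeholder records for illustration only; NOT results from the paper.
records = [
    ("title-prompt", "ai", "ai"),
    ("title-prompt", "ai", "ai"),
    ("abstract-prompt", "ai", "ai"),
    ("abstract-prompt", "ai", "human"),
]
print(accuracy_by_group(records))
```

Reporting accuracy per condition rather than as a single pooled number is what makes the gap between title-based and abstract-based prompts visible.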

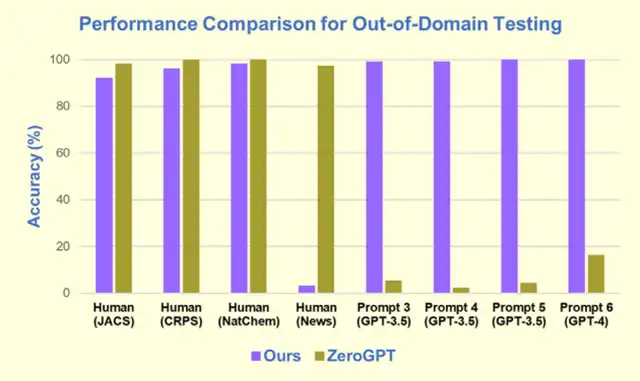

So, how does this ChatGPT Detector perform in recognizing papers from journals outside the training set?

The research team selected introductions from 150 papers, drawn from journals of different publishers, that were not included in the training set described above.

These introductions were from Cell Press’s Cell Reports Physical Science, Nature Chemistry from the Nature Publishing Group, and the Journal of the American Chemical Society from the ACS.

The results showed that the ChatGPT Detector performed well on introductions from these journals, which it had not been trained on, with accuracy ranging from 92% to 98%.

Additionally, the ChatGPT Detector successfully identified AI-generated text produced from a variety of prompts, including prompts designed to confuse AI detectors.

However, it’s important to note that this ChatGPT Detector is highly specialized for scientific journal papers. When given real articles from university newspapers, it failed to recognize them as human-written.

Large language models like ChatGPT can rapidly generate highly realistic text, but many journal publishers refuse to accept ChatGPT and similar AI models as authors of papers. An accurate method for telling human-written text from AI-generated text is therefore urgently needed.

The ChatGPT Detector developed in this study can accurately identify whether papers from scientific journals were written by humans or by AI, including the latest ChatGPT-4. Most importantly, it can accurately identify text generated from prompts intended to deceive AI detectors.

Paper Links:

https://www.nature.com/articles/d41586-023-03479-4

https://doi.org/10.1016/j.xcrp.2023.101672

https://doi.org/10.1016/j.xcrp.2023.101426