DeepSeek Reveals It Spent Only US$294,000 to Build the R1 Model

DeepSeek Reveals Ultra-Low Training Costs for R1 Model in Latest Research Paper

Chinese AI company demonstrates cost-effective approach to developing competitive large language models

Chinese artificial intelligence company DeepSeek has disclosed remarkably low training costs for its high-performance open-source model "R1," revealing development expenses that dramatically undercut those of major U.S. AI companies, according to a research paper published on September 17, 2025.

Breakthrough Cost Efficiency

The company's latest research reveals that building the R1 model cost just $294,000, a figure that has stunned an industry where model development typically requires massive financial investment. That is far below the cost of DeepSeek's previous "V3" model, a model comparable to Anthropic's Claude that required $5.6 million in training costs, according to earlier company disclosures.
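As a rough illustration of the gap between the two reported figures, the Python sketch below computes how many times larger the V3 cost is than the R1 cost. It takes the article's numbers at face value and assumes both figures cover a comparable scope of work, which has not been established; the helper name `cost_ratio` is hypothetical.

```python
# Reported training-cost figures from the article (US dollars).
# Assumption: both figures cover a comparable scope of work.
R1_COST = 294_000      # DeepSeek R1, as reported
V3_COST = 5_600_000    # DeepSeek V3, per earlier company disclosures


def cost_ratio(larger: float, smaller: float) -> float:
    """Return how many times larger one cost figure is than another (hypothetical helper)."""
    if smaller <= 0:
        raise ValueError("smaller cost must be positive")
    return larger / smaller


ratio = cost_ratio(V3_COST, R1_COST)
share = R1_COST / V3_COST * 100  # R1 cost as a percentage of V3 cost
print(f"V3 cost {ratio:.1f}x R1; R1 is {share:.2f}% of V3")
```

Under these assumptions, R1's reported cost is roughly one nineteenth of V3's.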

The cost differential becomes even more striking when compared with industry leaders such as OpenAI, whose CEO Sam Altman has estimated that GPT-4 development cost more than $100 million. The disparity extends to API pricing: DeepSeek charges $0.14 per million tokens (roughly 750,000 words of text), compared with OpenAI's $7.50 for equivalent service tiers.
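The per-token prices above can be turned into a concrete bill with simple arithmetic. This is a minimal sketch assuming the quoted prices apply uniformly to every token (real APIs often price input and output tokens differently). It also uses the article's rough conversion of 1 million tokens to about 750,000 words. The 10-million-token workload and the function names are hypothetical.

```python
# Per-million-token prices quoted in the article (US dollars).
DEEPSEEK_PRICE_PER_M = 0.14
OPENAI_PRICE_PER_M = 7.50
# Rough rule of thumb from the article: 1M tokens is about 750,000 words.
WORDS_PER_TOKEN = 0.75


def api_cost(tokens: int, price_per_million: float) -> float:
    """Dollar cost of processing `tokens` tokens at a flat per-million-token price."""
    return tokens / 1_000_000 * price_per_million


def tokens_for_words(words: int) -> int:
    """Approximate token count for a number of words (rule of thumb only)."""
    return round(words / WORDS_PER_TOKEN)


# Hypothetical workload: 10 million tokens.
workload = 10_000_000
deepseek = api_cost(workload, DEEPSEEK_PRICE_PER_M)
openai = api_cost(workload, OPENAI_PRICE_PER_M)
print(f"DeepSeek ${deepseek:.2f} vs OpenAI ${openai:.2f} "
      f"({openai / deepseek:.1f}x)")
```

Because both prices are linear in token count, the roughly 54x price ratio holds for any workload size under this flat-pricing assumption.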

Technical Innovation Under Constraints

DeepSeek’s achievement is particularly noteworthy given the challenging operational environment faced by Chinese AI companies. U.S. export restrictions on advanced semiconductor technology have created significant competitive barriers, forcing Chinese firms to develop innovative approaches using less powerful hardware.

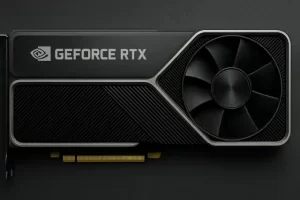

The company achieved competitive performance by carefully optimizing its use of less capable, export-compliant hardware. According to the research paper, R1 was built using 512 NVIDIA H800 chips, a China-specific variant with deliberately reduced capabilities compared with the versions sold in the U.S.

Industry Impact and Investment Implications

The January release of DeepSeek’s R1 model sent shockwaves through the AI investment landscape, raising questions about the sustainability of current AI funding models. The demonstration that competitive AI models could be developed at a fraction of traditional costs has prompted investors to reconsider the economics underlying companies like OpenAI, which continues to seek additional funding of $40 billion despite not yet achieving profitability.

Ongoing Debate and Future Outlook

Some industry experts have questioned whether DeepSeek's reported costs represent comprehensive development expenses, including infrastructure, research and development, and data acquisition, or only the final training run. Regardless of the exact scope, the cost advantage remains substantial compared with established AI leaders.

Recent reports suggest DeepSeek may announce new models in the coming months, with the September research paper representing the company’s most significant disclosure since the January R1 release.

While DeepSeek’s cost-effective approach has challenged assumptions about AI development economics, the broader industry investment trend appears unlikely to slow immediately. Industry analysts project AI-related spending will reach $1.5 trillion in 2025, suggesting the current investment momentum will continue despite questions raised by DeepSeek’s breakthrough efficiency.