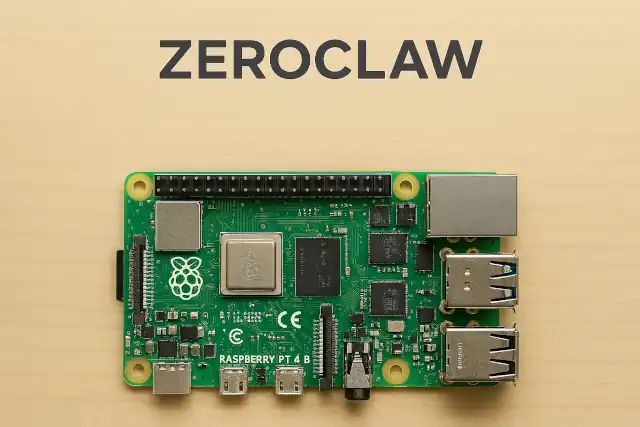

Zero Overhead.

Zero Compromise.

Deploy Anywhere.

How ZeroClaw — a 3.4 MB Rust binary — is turning a $10 Raspberry Pi into a fully autonomous, always-on AI agent capable of running Claude, DeepSeek, and Qwen without Node.js, Python, or compromise.

Most AI agents demand gigabytes of RAM, a Node.js runtime, and ideally a Mac mini tucked somewhere in a rack. ZeroClaw demands none of these. Written entirely in Rust, compiled to a single static binary weighing just 3.4 MB, and booting in under 10 milliseconds, ZeroClaw is positioning itself as the definitive infrastructure layer for running autonomous AI agents at the absolute edge of hardware.

The project — maintained at github.com/zeroclaw-labs/zeroclaw — has attracted significant community attention in early 2026, spawning forks, companion projects, and a dedicated subreddit, while the official Raspberry Pi blog featured the concept of running AI agents on Pi hardware in February 2026.

What Is ZeroClaw?

ZeroClaw describes itself as “the runtime operating system for agentic workflows” — an infrastructure layer that abstracts models, tools, memory, and execution so that agents can be built once and run anywhere. In practical terms, it is an LLM client framework that runs on constrained hardware, connects to any major AI provider, and exposes a rich tool ecosystem for real-world automation.

At its core, every subsystem — AI providers, communication channels, tools, memory backends, tunnels, security policies — is defined as a Rust trait. This means any component can be swapped by changing a single line in a config file, with zero code changes and zero recompilation. Switch from OpenAI to a local Ollama model, or from SQLite memory to PostgreSQL, in seconds.
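The trait-based design described above can be sketched in a few lines of Rust. This is a minimal illustration, not ZeroClaw's actual API: the `Provider` trait, the two provider structs, the `provider_from_config` function, and the `provider` config key are all hypothetical names chosen for the example.

```rust
use std::collections::HashMap;

/// Hypothetical provider trait; ZeroClaw's real trait and method names may differ.
trait Provider {
    fn name(&self) -> &'static str;
    fn complete(&self, prompt: &str) -> String;
}

struct OpenAiProvider;
struct OllamaProvider;

impl Provider for OpenAiProvider {
    fn name(&self) -> &'static str {
        "openai"
    }
    fn complete(&self, prompt: &str) -> String {
        // A real implementation would call the remote API here.
        format!("[openai] {}", prompt)
    }
}

impl Provider for OllamaProvider {
    fn name(&self) -> &'static str {
        "ollama"
    }
    fn complete(&self, prompt: &str) -> String {
        // A real implementation would talk to a local Ollama server here.
        format!("[ollama] {}", prompt)
    }
}

/// Pick an implementation at runtime from a config value.
/// Callers only ever see `dyn Provider`, so swapping needs no code changes.
fn provider_from_config(config: &HashMap<String, String>) -> Option<Box<dyn Provider>> {
    match config.get("provider").map(String::as_str) {
        Some("openai") => Some(Box::new(OpenAiProvider)),
        Some("ollama") => Some(Box::new(OllamaProvider)),
        _ => None,
    }
}
```

Because the rest of the program depends only on the trait object, changing the `provider` value from `openai` to `ollama` selects a different implementation without touching or recompiling the calling code.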

“ZeroClaw uses 4 MB of RAM. OpenClaw uses over 1 GB. That’s not an optimization — that’s a completely different class of software. Running it on my Raspberry Pi Zero with zero issues.”

@rustacean_dev, via zeroclaws.io community testimonials

Raspberry Pi & Edge Hardware

ZeroClaw was explicitly designed for constrained environments. The framework runs comfortably on the Raspberry Pi Zero W, 3B, 4B, and 5 series, as well as on Orange Pi, Sipeed boards, and any Linux device with ARM, x86, or RISC-V architecture. Hardware costing as little as $10 is sufficient for a full autonomous runtime.

For Raspberry Pi deployments, ZeroClaw supports GPIO and hardware tool control, enabling the agent to physically interact with connected peripherals — toggling RGB LEDs, reading sensors, or triggering relays — all within the same ReAct agent loop that handles AI dialogue and scheduling.

The deployment model is intentionally minimal: USB power, a WiFi connection, and the ZeroClaw binary are all that’s required. No Docker. No Python virtualenv. No Node.js runtime eating 390 MB before your code even starts.

    # Bootstrap script: handles Rust + ZeroClaw
    $ curl -fsSL https://raw.githubusercontent.com/zeroclaw-labs/zeroclaw/main/scripts/bootstrap.sh | bash

    # Interactive setup wizard
    $ zeroclaw onboard --interactive

    # Start full autonomous runtime
    $ zeroclaw daemon

    # Chat directly with Claude
    $ zeroclaw agent --provider anthropic -m "Hello, ZeroClaw!"

AI Providers & Communication Channels

ZeroClaw supports 22+ AI providers out of the box, including Anthropic (Claude), OpenAI, DeepSeek, Qwen (via OpenRouter or direct API), Ollama for local models, and any OpenAI-compatible endpoint. Multiple providers can be configured simultaneously, with runtime switching via the dual-Provider mechanism.

On the communication side, the project supports over 30 channels, enabling the agent to send and receive messages across most major messaging platforms.

WeChat Work integration is frequently mentioned in community discussions but should be independently verified against the current channel list, as support breadth evolves rapidly with new releases.

Persistent Memory System

ZeroClaw ships a custom-built memory engine that requires zero external dependencies — no Pinecone, no Elasticsearch, no Redis. Memory is handled locally through a hybrid retrieval system combining FTS5 keyword search (0.3 weight) and vector similarity scoring (0.7 weight), giving the agent both semantic recall and precise keyword lookup.
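To make the 0.3 / 0.7 weighting concrete, here is a minimal sketch of hybrid scoring. It assumes both the keyword score and the vector similarity have already been normalized to the range 0 to 1; the article does not say how ZeroClaw normalizes FTS5 scores, and the function names are illustrative only.

```rust
/// Weights quoted in the article: 0.3 for keyword search, 0.7 for vector similarity.
const KEYWORD_WEIGHT: f32 = 0.3;
const VECTOR_WEIGHT: f32 = 0.7;

/// Cosine similarity between two embeddings.
/// Returns 0.0 for mismatched lengths or zero-length vectors.
fn cosine_similarity(a: &[f32], b: &[f32]) -> f32 {
    if a.len() != b.len() {
        return 0.0;
    }
    let dot: f32 = a.iter().zip(b).map(|(x, y)| x * y).sum();
    let norm_a = a.iter().map(|x| x * x).sum::<f32>().sqrt();
    let norm_b = b.iter().map(|x| x * x).sum::<f32>().sqrt();
    if norm_a == 0.0 || norm_b == 0.0 {
        0.0
    } else {
        dot / (norm_a * norm_b)
    }
}

/// Blend a keyword score and a vector score, both assumed to lie in 0..=1.
fn hybrid_score(keyword: f32, vector: f32) -> f32 {
    KEYWORD_WEIGHT * keyword.clamp(0.0, 1.0) + VECTOR_WEIGHT * vector.clamp(0.0, 1.0)
}

/// Rank (id, keyword_score, vector_score) candidates, best match first.
fn rank<'a>(mut candidates: Vec<(&'a str, f32, f32)>) -> Vec<&'a str> {
    candidates.sort_by(|a, b| hybrid_score(b.1, b.2).total_cmp(&hybrid_score(a.1, a.2)));
    candidates.into_iter().map(|c| c.0).collect()
}
```

With these weights, a memory that matches semantically but shares few exact words with the query outranks one that matches only on keywords, while an exact keyword hit still lifts a result above others with similar embeddings.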

Three storage backends are available and swappable by config: SQLite (default, recommended for edge), PostgreSQL for server deployments, and flat Markdown files for human-readable, git-friendly storage of AI personality, user preferences, and long-term memory. All data is stored locally, so there is no cloud dependency and memory persists across restarts and power cycles.

Tool Invocation & Autonomous Scheduling

In ReAct mode, ZeroClaw enters an agent loop: perceive → reason → act → observe. The tool system includes shell/file/memory operations, cron and schedule management, Git integration, browser automation, HTTP requests, screenshot and image tools, Pushover notifications, hardware control (GPIO), and Composio integration (opt-in). The delegate tool spawns sub-agents with a controlled recursion depth.
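The perceive, reason, act, observe cycle can be sketched as a small loop. The sketch below is an illustration under assumptions, not ZeroClaw's implementation: `Decision`, `run_agent`, and the closure-based reasoner and tool executor are hypothetical, and a real agent would call an LLM where the `reason` closure is invoked.

```rust
/// What the reasoning step decided to do next (hypothetical type).
enum Decision {
    CallTool { name: String, input: String },
    Finish(String),
}

/// Run the loop until the reasoner finishes or the step budget runs out.
/// `reason` stands in for the LLM call; `act` stands in for the tool system.
fn run_agent<R, T>(task: &str, mut reason: R, mut act: T, max_steps: usize) -> Option<String>
where
    R: FnMut(&[String]) -> Decision,
    T: FnMut(&str, &str) -> String,
{
    // Perceive: the transcript starts with the incoming task.
    let mut transcript = vec![format!("task: {}", task)];
    for _ in 0..max_steps {
        // Reason: decide on the next step from everything seen so far.
        match reason(&transcript) {
            Decision::Finish(answer) => return Some(answer),
            Decision::CallTool { name, input } => {
                // Act: execute the chosen tool.
                let observation = act(&name, &input);
                // Observe: feed the result back into the next reasoning step.
                transcript.push(format!("{}({}) -> {}", name, input, observation));
            }
        }
    }
    // Step budget exhausted without a final answer.
    None
}
```

The explicit `max_steps` bound is a design choice for the sketch: it keeps a confused reasoner from looping forever, in the same spirit as the controlled recursion depth mentioned for the delegate tool.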

The built-in Cron scheduler and heartbeat mechanism allow the AI to create its own scheduled tasks and proactively execute them — checking weather, aggregating news, sending morning briefings, or monitoring services — without any human trigger.
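A heartbeat-driven scheduler of this kind can be approximated with plain intervals. The sketch below is a simplification under stated assumptions: it uses fixed intervals in seconds rather than cron expressions, takes the current time as a parameter instead of reading a clock, and all type and method names are hypothetical.

```rust
/// A recurring task registered by the agent (hypothetical type).
struct ScheduledTask {
    name: String,
    interval_secs: u64,
    next_due: u64,
}

/// Minimal interval scheduler driven by an external heartbeat.
struct Scheduler {
    tasks: Vec<ScheduledTask>,
}

impl Scheduler {
    fn new() -> Self {
        Scheduler { tasks: Vec::new() }
    }

    /// Register a task whose first run is one interval after `now`.
    fn add(&mut self, name: &str, interval_secs: u64, now: u64) {
        self.tasks.push(ScheduledTask {
            name: name.to_string(),
            interval_secs,
            next_due: now + interval_secs,
        });
    }

    /// Called on every heartbeat: returns the names of tasks due at `now`
    /// (in registration order) and schedules their next run.
    fn tick(&mut self, now: u64) -> Vec<String> {
        let mut due = Vec::new();
        for task in &mut self.tasks {
            if now >= task.next_due {
                due.push(task.name.clone());
                task.next_due = now + task.interval_secs;
            }
        }
        due
    }
}
```

In use, the agent would call `add` when it decides to create a recurring job, and the daemon's heartbeat would call `tick` with the current Unix time, handing each returned task name to the agent loop for execution.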

Security Architecture

Security is a stated first-class concern. ZeroClaw defaults to a localhost-first network posture, requiring pairing-based access for any new connection. File access is workspace-scoped by default. Shell execution is gated on configured autonomy level. Three modes are available:

| Mode | Description | Autonomy |
|---|---|---|
| Readonly | Inspection and low-risk tasks only | Minimal |
| Supervised | Balances autonomy with human oversight on sensitive actions | Moderate |
| Full Autonomy | Broader execution for approved workflows and environments | High (use carefully) |
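One way to picture how an autonomy level gates an action is a small decision function. This is a sketch under assumptions: the article names the three modes but not the exact rules, so the `Action` categories, the `Verdict` outcomes, and the mapping between them are illustrative rather than ZeroClaw's actual policy.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Autonomy {
    ReadOnly,
    Supervised,
    Full,
}

/// Coarse action categories, invented for this example.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum Action {
    ReadFile,
    WriteFile,
    RunShell,
}

#[derive(Debug, PartialEq, Eq)]
enum Verdict {
    Allow,
    AskHuman,
    Deny,
}

/// Decide whether an action may proceed under the configured autonomy level.
fn gate(mode: Autonomy, action: Action) -> Verdict {
    match (mode, action) {
        // Inspection is permitted in every mode.
        (_, Action::ReadFile) => Verdict::Allow,
        // Read-only mode refuses anything that changes state.
        (Autonomy::ReadOnly, _) => Verdict::Deny,
        // Supervised mode routes state-changing actions through a human.
        (Autonomy::Supervised, _) => Verdict::AskHuman,
        // Full autonomy lets approved workflows run unattended.
        (Autonomy::Full, _) => Verdict::Allow,
    }
}
```

Centralizing the check in one function means every tool passes through the same policy, so tightening a mode is a change in one place.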

Additional protections include a comprehensive argument sanitizer that blocks injection via shell metacharacters and backticks, symlink escape detection, explicit path allowlists, and rate limiting on tool invocations.
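Two of these protections, metacharacter blocking and workspace scoping, can be sketched with the standard library alone. The forbidden-character list and both function names are assumptions made for the example, not ZeroClaw's actual rules. The path check is purely lexical, so it does not detect symlink escapes; that would additionally require resolving the path on the real filesystem, for example with `std::fs::canonicalize`.

```rust
use std::path::{Component, Path, PathBuf};

/// Characters treated as shell metacharacters in this sketch (illustrative list).
const FORBIDDEN: &[char] = &['`', '$', ';', '|', '&', '>', '<', '\n'];

/// Reject any argument containing a shell metacharacter or backtick.
fn is_safe_argument(arg: &str) -> bool {
    !arg.chars().any(|c| FORBIDDEN.contains(&c))
}

/// Lexically resolve `relative` under `workspace`.
/// Absolute paths and any `..` component are refused rather than normalized.
fn resolve_in_workspace(workspace: &Path, relative: &str) -> Option<PathBuf> {
    let mut resolved = workspace.to_path_buf();
    for component in Path::new(relative).components() {
        match component {
            Component::Normal(part) => resolved.push(part),
            Component::CurDir => {}
            // Parent hops, root directories, and Windows prefixes all escape the workspace.
            _ => return None,
        }
    }
    Some(resolved)
}
```

Refusing suspicious input outright, instead of trying to escape or normalize it, keeps the check easy to reason about at the cost of rejecting some legitimate arguments.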

How ZeroClaw Compares

| Runtime | Language | Binary | RAM Usage | Dependencies |
|---|---|---|---|---|
| ZeroClaw | Rust | ~3.4 MB | <5 MB | None (static) |
| OpenClaw | Node.js | n/a | ~400+ MB | Node.js (~390 MB) |
| NanoBot | Python | n/a | ~200+ MB | Python runtime |
| PicoClaw | Go | ~small | ~10–20 MB | None (static) |

RAM figures are runtime measurements on release builds. Benchmark claims can drift with code evolution; always measure your current build locally.

Important: Official Repository & Impersonation Warning

The official ZeroClaw repository is github.com/zeroclaw-labs/zeroclaw and the official website is zeroclawlabs.ai. The project team has publicly warned that the domains zeroclaw.org and zeroclaw.net currently point to an unauthorized fork (openagen/zeroclaw) that is impersonating the official project. Do not trust binaries, fundraising announcements, or information from those sources. Use only the verified GitHub repository and official social accounts (@zeroclawlabs on X/Telegram/Reddit).

Claude Code OAuth tokens notice (February 19, 2026): Anthropic updated its Authentication and Credential Use terms. Claude Code OAuth tokens (Free, Pro, Max) are intended exclusively for Claude Code and Claude.ai. Using them in ZeroClaw or any third-party agent tool may violate the Consumer Terms of Service. Use a dedicated Anthropic API key for ZeroClaw deployments instead.

The Bottom Line

ZeroClaw represents a genuinely different philosophy for AI agent infrastructure: instead of abstracting away hardware constraints, it embraces them. A 3.4 MB binary, a sub-10 ms cold start, and a memory footprint of a few megabytes mean that serious autonomous AI work is now possible on a device you can power from a USB port and forget about in a drawer.

The project is open-source under Apache 2.0, actively maintained, and building a growing community around edge AI deployment. For developers interested in running persistent, intelligent agents on constrained hardware — without cloud lock-in, without heavyweight runtimes, and without compromise — ZeroClaw is the most technically compelling option available today.