Samsung Announces HBM4e Memory for 2027: Bandwidth to Reach 3.25TB/s

Next-generation memory promises a massive performance leap for AI computing but faces production challenges.

Samsung has unveiled plans to launch its next-generation HBM4e memory in 2027, marking a significant milestone in high-bandwidth memory technology.

The announcement comes as the company aims to reclaim leadership in the HBM market after falling behind competitor SK Hynix in recent generations.

Impressive Performance Specifications

HBM4e's headline specifications represent a substantial step forward. With a pin speed of 13Gbps across a 2048-bit bus, each stack will deliver 3.25TB/s of bandwidth, approximately 62% more than the standard HBM4 specification.
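The 3.25TB/s figure follows directly from the pin speed and bus width: peak bandwidth equals pin speed times bus width, divided by 8 bits per byte. The minimal Python sketch below reproduces that arithmetic, assuming the quoted numbers are per-stack peak values; the function name `hbm_bandwidth_gb_s` is illustrative, not part of any real API.

```python
def hbm_bandwidth_gb_s(pin_speed_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth of one HBM stack in gigabytes per second."""
    # Every data pin carries pin_speed_gbps gigabits per second;
    # dividing by 8 converts gigabits to gigabytes.
    return pin_speed_gbps * bus_width_bits / 8


hbm4e = hbm_bandwidth_gb_s(13, 2048)      # 3328.0 GB/s
hbm4_spec = hbm_bandwidth_gb_s(8, 2048)   # 2048.0 GB/s

# The article's "3.25TB/s" corresponds to dividing GB/s by 1024.
print(f"HBM4e: {hbm4e:.0f} GB/s = {hbm4e / 1024:.2f} TB/s")  # HBM4e: 3328 GB/s = 3.25 TB/s
print(f"Uplift over HBM4 spec: {hbm4e / hbm4_spec - 1:.1%}")  # Uplift over HBM4 spec: 62.5%
```

The 62.5% result matches the "approximately 62%" uplift quoted for HBM4e over standard HBM4.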

To put this in perspective, the standard HBM4 specification calls for 8Gbps speeds and 2TB/s of bandwidth. Driven by NVIDIA's requirements, however, production HBM4 parts have already been pushed to 11Gbps and 2.8TB/s. Because commercial release is still about two years away, Samsung may push HBM4e speeds higher still.
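The figures above mix two unit conventions. Computing all three configurations over the same 2048-bit interface shows that the 3.25TB/s HBM4e figure matches a binary reading (1TB = 1024GB), while the 2.8TB/s production HBM4 figure is closest to a decimal one (1TB = 1000GB). The sketch below is plain arithmetic on the article's numbers; the dictionary labels and the helper `bandwidth_tb_s` are illustrative names only.

```python
# Peak per-stack bandwidth for the three configurations discussed,
# all using the same 2048-bit interface.
BUS_WIDTH_BITS = 2048

configs = {
    "HBM4 (JEDEC spec)": 8,        # Gbps per pin
    "HBM4 (production)": 11,
    "HBM4e (Samsung target)": 13,
}


def bandwidth_tb_s(pin_speed_gbps: float, divisor: int) -> float:
    """Convert pin speed to TB/s; divisor is 1000 (decimal) or 1024 (binary)."""
    gb_per_s = pin_speed_gbps * BUS_WIDTH_BITS / 8
    return gb_per_s / divisor


for name, speed in configs.items():
    decimal = bandwidth_tb_s(speed, 1000)  # 1 TB = 1000 GB
    binary = bandwidth_tb_s(speed, 1024)   # 1 TB treated as 1024 GB
    print(f"{name:24} {speed:>2} Gbps  {decimal:.3f} TB/s (decimal)  {binary:.3f} TB/s (binary)")
```

Either way, the relative gap between generations is the same, so percentage comparisons hold as long as both figures use one convention.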

Energy Efficiency Gains

Beyond raw performance, Samsung reports significant gains in power efficiency. HBM4e is expected to consume just 3.9 picojoules per bit, double the energy efficiency of HBM3e, which means moving the same amount of data takes roughly half the energy. This improvement is crucial because power management becomes increasingly critical as AI systems continue to scale.
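An energy-per-bit figure is easiest to read as the energy needed to move a fixed amount of data. The sketch below assumes the 3.9pJ/bit figure applies uniformly to every transferred bit, and infers an HBM3e value of about 7.8pJ/bit purely from the "double the energy efficiency" claim; neither is a measured power number, and `transfer_energy_joules` is a hypothetical helper.

```python
def transfer_energy_joules(pj_per_bit: float, gigabytes: float) -> float:
    """Energy to move `gigabytes` of data at a given picojoules-per-bit cost."""
    bits = gigabytes * 1e9 * 8          # decimal gigabytes to bits
    return pj_per_bit * 1e-12 * bits    # picojoules to joules


HBM4E_PJ_PER_BIT = 3.9
# Assumption: "double the energy efficiency" implies ~7.8 pJ/bit for HBM3e.
HBM3E_PJ_PER_BIT = 2 * HBM4E_PJ_PER_BIT

for name, pj in [("HBM3e (implied)", HBM3E_PJ_PER_BIT), ("HBM4e", HBM4E_PJ_PER_BIT)]:
    millijoules = transfer_energy_joules(pj, 1.0) * 1e3
    print(f"{name}: {millijoules:.1f} mJ per GB moved")
```

Power is energy per bit multiplied by bits per second, so halving the energy per bit halves total memory power only if the data rate stays the same; a system that also moves more data per second will see a smaller reduction.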

Production Challenges Ahead

Despite the promising specifications, Samsung faces significant manufacturing hurdles. The 1c DRAM chips used to build HBM4e currently have yield rates below 50%. Because fewer usable dies come off each wafer, low yields raise production costs, which could affect both pricing and profitability.
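Why yield matters so much for cost can be shown with a simple model: the cost of a wafer is spread only over the dies that work, so cost per good die is inversely proportional to yield. The wafer cost and dies-per-wafer values below are hypothetical placeholders, not Samsung data; only the shape of the relationship is the point.

```python
# Hypothetical placeholder inputs, not real Samsung figures.
WAFER_COST_USD = 10_000
DIES_PER_WAFER = 1_000


def cost_per_good_die(yield_rate: float) -> float:
    """Wafer cost divided across only the dies that pass testing."""
    if not 0 < yield_rate <= 1:
        raise ValueError("yield_rate must be in (0, 1]")
    good_dies = DIES_PER_WAFER * yield_rate
    return WAFER_COST_USD / good_dies


for y in (0.9, 0.7, 0.5, 0.4):
    print(f"yield {y:.0%}: ${cost_per_good_die(y):.2f} per good die")
```

Under this model, a yield of 50% doubles the cost of each usable die relative to a perfect wafer, regardless of the placeholder values chosen.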

Market Dynamics and NVIDIA’s Influence

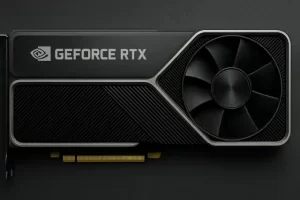

Perhaps the most critical factor in HBM4e's commercial success will be adoption by NVIDIA, which dominates the AI accelerator market. Samsung's ability to secure NVIDIA orders will largely determine how many HBM4e units it ships. AMD's certification requirements are somewhat more lenient, but its AI GPU sales remain far smaller than NVIDIA's.

Context: The HBM Evolution

As GPU performance continues to advance, memory bandwidth increasingly becomes the bottleneck in AI workloads, which has made high-bandwidth memory indispensable for AI computing. The industry is preparing to transition to HBM4 next year, with HBM4e representing the generation beyond that.

Samsung lagged behind SK Hynix during the HBM3 and HBM3e eras but has been catching up with HBM4. With HBM4e, the company clearly aims to establish market leadership, which explains its aggressive performance targets and the early announcement of a 2027 launch.

As AI computing demands continue to escalate, the race to develop faster, more efficient memory technologies shows no signs of slowing down. Whether Samsung can overcome its production challenges and secure major customers will determine if HBM4e becomes a turning point in the company’s HBM memory business.

What is HBM4e Memory?

HBM4e is the next evolution in High Bandwidth Memory (HBM) technology, specifically designed for high-performance computing applications like AI accelerators and advanced GPUs.

Understanding HBM

HBM (High Bandwidth Memory) is a type of memory that stacks multiple DRAM chips vertically and connects them directly to processors using extremely wide data buses. This architecture allows for much higher bandwidth compared to traditional memory designs.

The "4e" designation indicates an enhanced version of HBM4, the fourth major version of the HBM standard. In memory naming conventions, the "e" typically stands for "enhanced" or "extreme," signifying improved specifications over the base standard.

Key Characteristics of HBM4e

Extreme Bandwidth: HBM4e will deliver data transfer rates of 3.25TB/s (terabytes per second), which is essential for feeding data to powerful AI processors that can quickly become bottlenecked by slower memory.

Vertical Stacking: Like other HBM generations, HBM4e uses 3D stacking technology to place multiple memory layers on top of each other, connected through microscopic vertical connections called TSVs (Through-Silicon Vias).

Wide Interface: The memory uses a 2048-bit wide bus—much wider than conventional memory’s 64-bit or 128-bit buses—allowing massive amounts of data to move simultaneously.

Energy Efficiency: Despite the high performance, HBM4e is designed to be power-efficient, consuming only 3.9 picojoules per bit of data transferred.
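The value of a wide interface is easiest to see by holding the per-pin speed fixed and varying only the bus width. The sketch below assumes every bus runs at HBM4e's 13Gbps per pin, which conventional memory does not actually do; the assumption isolates the effect of width. It also computes the per-pin rate a narrower bus would need to match HBM4e's 3328GB/s. Both helper names are illustrative.

```python
def bandwidth_gb_s(pin_speed_gbps: float, bus_width_bits: int) -> float:
    """Peak bandwidth in GB/s: pins x per-pin rate, converted to bytes."""
    return pin_speed_gbps * bus_width_bits / 8


def pin_speed_needed(target_gb_s: float, bus_width_bits: int) -> float:
    """Per-pin rate (Gbps) a bus of the given width needs to reach the target."""
    return target_gb_s * 8 / bus_width_bits


target = bandwidth_gb_s(13, 2048)  # HBM4e peak: 3328 GB/s

for width in (64, 128, 2048):
    print(f"{width:>4}-bit bus at 13 Gbps: {bandwidth_gb_s(13, width):>6.0f} GB/s; "
          f"needs {pin_speed_needed(target, width):.0f} Gbps/pin to match HBM4e")
```

A 64-bit bus would need about 416Gbps per pin to match a single HBM4e stack, which is why HBM relies on thousands of relatively slow pins rather than a few very fast ones.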

Why HBM4e Matters

As AI models grow larger and more complex, they require enormous amounts of data to be moved between memory and processors. Traditional memory simply can’t keep up with modern AI accelerators, making HBM technology critical for advancing AI computing capabilities. HBM4e represents the cutting edge of this technology, pushing the boundaries of what’s possible in memory performance.
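A back-of-envelope bound shows why bandwidth, not compute, often limits AI workloads. If each step of a workload must stream a fixed number of bytes from memory (for example, one decoding step in large-model inference at small batch sizes, which reads all model weights), then steps per second cannot exceed bandwidth divided by bytes per step. The sketch below uses hypothetical inputs: 140GB of weights (a 70-billion-parameter model at 2 bytes per parameter) and 8 HBM stacks per accelerator, with the article's per-stack TB/s figures taken at face value.

```python
def max_steps_per_second(bandwidth_tb_s: float, bytes_per_step: float) -> float:
    """Upper bound on steps/s when each step streams bytes_per_step from memory."""
    return bandwidth_tb_s * 1e12 / bytes_per_step


# Hypothetical workload and accelerator configuration.
WEIGHTS_BYTES = 140e9   # 70B parameters x 2 bytes each
STACKS = 8              # hypothetical HBM stacks per accelerator

for label, per_stack_tb_s in [("HBM4 spec", 2.0), ("HBM4e", 3.25)]:
    total = per_stack_tb_s * STACKS
    bound = max_steps_per_second(total, WEIGHTS_BYTES)
    print(f"{label}: {total:.1f} TB/s -> at most {bound:.0f} steps/s")
```

The bound rises in direct proportion to bandwidth, so in this idealized model a faster accelerator core alone would not help; only more memory bandwidth raises the ceiling.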

Samsung Announces HBM4e Memory for 2027: Bandwidth to Reach 3.25TB/s