South Korean Low-Cost AI Chip to Challenge NVIDIA H100

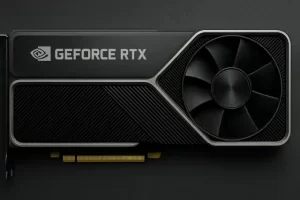

Hyper Accel’s 4nm LPU processor targets data center market at just 10% of H100 pricing.

A South Korean startup is preparing to shake up the AI chip market with an ultra-affordable processor designed specifically for large language models.

Hyper Accel, founded in January 2023, announced plans to launch its 4nm AI chip called the LPU (Language Processing Unit) next year, positioning it as a budget-friendly alternative to expensive GPU solutions.

Ambitious Pricing Strategy

The most striking feature of Hyper Accel’s offering is its aggressive pricing. The LPU will retail for approximately 5 million Korean won (roughly $3,750), about one-tenth the cost of NVIDIA’s flagship H100 data center GPU. A price gap of that size could put AI processing power within reach of smaller companies and research institutions.
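The pricing figures can be cross-checked with a few lines of arithmetic. This is a minimal sketch using only the numbers quoted above. The exchange rate and the H100 price it prints are values implied by those numbers, not figures stated by Hyper Accel or NVIDIA, and the variable names are illustrative.

```python
# Figures quoted in the article.
LPU_PRICE_KRW = 5_000_000   # approximate retail price in Korean won
LPU_PRICE_USD = 3_750       # the article's rough dollar conversion
H100_TO_LPU_MULTIPLE = 10   # "one-tenth the cost" means the H100 costs ~10x

# Derived values (implied by the figures above, not stated in the article).
implied_krw_per_usd = LPU_PRICE_KRW / LPU_PRICE_USD
implied_h100_price_usd = LPU_PRICE_USD * H100_TO_LPU_MULTIPLE

print(f"Implied exchange rate: {implied_krw_per_usd:.0f} KRW per USD")
print(f"Implied H100 price: ${implied_h100_price_usd:,}")
```

The implied H100 price is only as accurate as the one-tenth ratio. Actual GPU prices vary by vendor, volume, and configuration.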

Technical Approach and Design Philosophy

The LPU processor employs a unique architecture specifically optimized for large language model workloads, focusing on low power consumption while maximizing memory bandwidth utilization. This targeted design approach aims to address two critical challenges facing AI data centers: excessive power consumption and memory bandwidth bottlenecks.

Rather than using expensive High Bandwidth Memory (HBM) as premium AI accelerators do, Hyper Accel opted for LPDDR5X memory to keep costs down, while still reaching roughly 90% utilization of the available memory bandwidth. This engineering trade-off reflects the company’s priority of delivering competitive performance at a fraction of traditional costs.
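To see why bandwidth utilization matters for language models, consider a common back-of-the-envelope estimate: when generating one token at a time, a model must read roughly all of its weights from memory per token, so throughput is bounded by effective bandwidth divided by model size. The sketch below applies that rule of thumb. Only the 90% figure comes from the article. The peak bandwidth, model size, and precision are hypothetical placeholders, since Hyper Accel has not published performance figures, and the function name is illustrative.

```python
def decode_tokens_per_second(peak_bandwidth_gb_s, utilization,
                             params_billion, bytes_per_param):
    """Rough upper bound on single-stream decode speed when memory-bound.

    Assumes every generated token requires reading all model weights once
    and ignores compute time, caches, and batching.
    """
    effective_bandwidth_gb_s = peak_bandwidth_gb_s * utilization
    # Billions of parameters times bytes per parameter gives gigabytes.
    weight_gb = params_billion * bytes_per_param
    return effective_bandwidth_gb_s / weight_gb


# Hypothetical inputs: 400 GB/s peak bandwidth, a 7-billion-parameter model
# stored at 2 bytes per parameter. Only the 0.90 utilization is from the article.
estimate = decode_tokens_per_second(400.0, 0.90, 7.0, 2.0)
print(f"Estimated upper bound: {estimate:.1f} tokens/s")
```

Under this simplified model, throughput scales linearly with utilization, which is why a design that extracts more of a cheaper memory's bandwidth can narrow the gap with HBM-based parts.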

Manufacturing and Timeline

The LPU will be manufactured using Samsung’s advanced 4nm process technology. According to the company, chip design has been completed and the processor is currently undergoing backend verification. Production is scheduled to begin next year, with market deployment expected by late 2025 for data center applications.

Market Context

This development aligns with South Korea’s broader ambitions to establish itself as the world’s third-largest AI hub. The country has been investing heavily in artificial intelligence infrastructure and capabilities, viewing the sector as crucial for future economic competitiveness.

While Hyper Accel has not disclosed specific performance metrics for the LPU, the startup has already attracted significant investment interest, securing 61 billion Korean won in funding by the end of 2023 despite being less than two years old.

If the venture succeeds, it could signal a shift in an AI chip landscape currently dominated by high-cost solutions, opening the way to broader AI adoption across industries.