World’s First Ethernet AI Rack Challenges NVIDIA

AMD and HPE Break NVIDIA’s AI Infrastructure Monopoly with World’s First Ethernet-Based AI Rack

Industry giants team up to challenge NVIDIA’s dominance in AI datacenter interconnect technology

In a significant development for the AI infrastructure market, AMD and Hewlett Packard Enterprise (HPE) have announced the world’s first AI rack system based on open Ethernet standards, directly challenging NVIDIA’s long-standing grip on high-performance AI datacenter networking.

Breaking the Interconnect Stranglehold

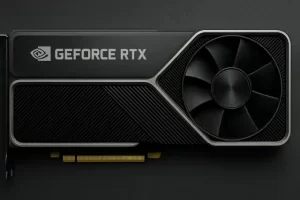

NVIDIA’s dominance in the AI accelerator market has rested on three pillars: raw GPU performance, the ubiquitous CUDA software ecosystem, and perhaps most critically, proprietary interconnect technology.

Through its NVLink architecture and the 2020 acquisition of Mellanox, which brought it high-speed InfiniBand networking, NVIDIA has maintained what industry observers describe as a “chokehold” on AI rack-level infrastructure.

Competitors have struggled to match the performance and integration of NVIDIA’s end-to-end solutions.

AMD’s new collaboration with HPE represents the first serious challenge to this technical moat.

The Helios System: Specs and Innovation

HPE’s new AI rack solution, built around AMD’s Helios platform, features 72 Instinct MI455X GPUs delivering 260 TB/s of aggregate scale-up bandwidth and up to 2.9 exaFLOPS of FP4 performance, making it suitable for both AI training and inference workloads.
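The rack-level bandwidth figure is easier to compare with other systems when expressed per GPU. The following is a minimal sketch, assuming the 260 TB/s aggregate is split evenly across the 72 GPUs; the announcement quoted here gives only rack totals, and the function and constant names are illustrative.

```python
def per_gpu_share(rack_total: float, gpu_count: int) -> float:
    """Split a rack-level aggregate evenly across GPUs (an assumption)."""
    if gpu_count <= 0:
        raise ValueError("gpu_count must be positive")
    return rack_total / gpu_count


GPUS_PER_RACK = 72            # GPUs per Helios rack, as stated in the article
RACK_BANDWIDTH_TB_S = 260.0   # aggregate bandwidth in TB/s, as stated

per_gpu_bandwidth = per_gpu_share(RACK_BANDWIDTH_TB_S, GPUS_PER_RACK)
print(f"{per_gpu_bandwidth:.2f} TB/s per GPU")  # prints "3.61 TB/s per GPU"
```

The same helper can be applied to any other rack-level total, such as the FP4 compute figure, to get a rough per-GPU number.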

However, the breakthrough isn’t just about computational power. The system’s most significant innovation lies in its networking architecture: the companies describe it as the first AI rack to connect its GPUs over standard Ethernet rather than a proprietary interconnect.

The solution incorporates Juniper network switches, developed jointly by HPE and Broadcom, that are based on the Ultra Accelerator Link over Ethernet (UALoE) open standard. This architecture is designed to support the massive throughput requirements of large-scale AI models while maintaining compatibility with industry-standard networking.

Strategic Implications

The move to open Ethernet standards could reshape the AI infrastructure landscape. NVIDIA’s proprietary approach has locked customers into its ecosystem, making it difficult and expensive to mix components from different vendors. An open standard could allow greater flexibility, easier integration, and more competition, all of which could drive down costs and accelerate innovation.

The timing is significant: enterprises and cloud providers are increasingly concerned about vendor lock-in and are seeking more diverse supply chains for AI infrastructure, and interest in open standards and interoperability has grown across the broader tech industry.

Availability and Market Entry

The HPE-AMD Helios AI rack is scheduled for commercial release in 2026. HPE will offer it as a turnkey solution built on Open Compute Project (OCP) open rack standards, designed to simplify deployment for customers and partners while providing scalable, flexible infrastructure for demanding AI workloads.

Whether this Ethernet-based approach can match or exceed the performance of NVIDIA’s tightly integrated NVLink and InfiniBand solutions remains to be seen in real-world deployments. However, the announcement signals that the AI infrastructure market is entering a new phase of competition, one where open standards may challenge proprietary dominance and give customers more choice and more competitive pricing.

As AI workloads continue to grow in scale and complexity, the battle over interconnect technology may prove as consequential as the competition in GPU performance itself.